Emotion Transfer Protocol

Experiments in emotion transmission

Valtteri Wikström

2015

I Background

This first part reviews the background of the project and the fundamentals of emotions in science and technology. The first chapter, Emotion transfer protocol - a draft, begins with an explanation of the context of this thesis and the background of the problem from a social, technological and scientific point of view. The second chapter, What are emotions?, explains the theoretical background of emotions and presents several different approaches, as no single answer to the question in the chapter title exists.

1 Emotion transfer protocol - a draft

The argument that underlies this work is that the current state of communication over the internet offers neither a natural nor an optimal way of conveying emotions, and therefore inhibits the most important precursor of rich and functional interaction: empathy. Because of this, several areas of the internet can and should be improved. The benefits of creating more possibilities for emotion transmission, and ultimately greater empathy, are manifold: more emotional bandwidth can improve communication on a personal and business level, and natural emotional interaction will support the healthy development of socio-emotional skills in children, who are exposed to increasing amounts of technologically mediated communication. The positive aspects are not limited to children, as emotional communication is in many forms more universal than language, and many interpersonal and international misunderstandings can be alleviated if the emotional pathway is cleared. Furthermore, new ways of expression and still unimaginable forms of art and applications can be built by harnessing the emotion transmission channel.

In this master’s thesis, I explore the possibilities of using affective technologies for enriching online communication and formulate a proposal based on them. This thesis can be used as a guidebook for practical approaches to emotions in digital projects, especially by interaction designers and media artists. It is also a preliminary proposal for a new internet protocol, the emotion transfer protocol (ETP). The way the protocol is presented is not meant to be a formal definition, but a collection of possibilities for using emotions and especially empathy as material.

In this first part I introduce the problem and explain the context for this work. Next, I move on to different scientific theories of emotion, and explore their practical and theoretical value. In the second part I approach the drafting of a new protocol by giving an overview of current practices for emotion recognition, transmission and presentation, with the aim of giving practical guidelines and presenting tools that can be used for creating emotionally aware applications and experiments. In the last part I present my own work in this area and reflect on the learnings and outcomes of three distinct approaches.

The problem of the existence of empathy online is technically threefold: we need to read either deliberate or unconscious emotions of the sender (Input), present emotions to the recipient in a meaningful way (Output), and transfer emotions by transforming the emotionally relevant input into emotionally meaningful output without losing information in the process (Transmission). Input, Output and Transmission are each approached in a separate chapter. Apart from technical challenges, a hot potato is the actual nature of emotions, which I approach from the point of view of psychological theory, especially appraisal theory, and of explanatory frameworks such as dimensional emotions, basic emotions, and their derivatives. Taking current scientific understanding of emotions into consideration is essential in building good applications.
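The division into Input, Transmission and Output can be made concrete with a small sketch. It is purely illustrative and not a definition of ETP: the `EmotionSample` fields, the valence and arousal scales, the JSON wire format and all function names are assumptions introduced here for the example.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class EmotionSample:
    """Hypothetical unit of emotional information (not a format defined in this thesis)."""
    source: str       # where the reading came from, e.g. "self-report" or "heart-rate"
    deliberate: bool  # True if the sender expressed the emotion on purpose
    valence: float    # assumed scale: -1.0 (negative) to 1.0 (positive)
    arousal: float    # assumed scale: 0.0 (calm) to 1.0 (excited)


def _clamp(value, low, high):
    return max(low, min(high, value))


def read_input(source, deliberate, valence, arousal):
    """Input: turn a raw reading into a sample, clamped to the assumed scales."""
    return EmotionSample(source, deliberate,
                         _clamp(valence, -1.0, 1.0),
                         _clamp(arousal, 0.0, 1.0))


def transmit(sample):
    """Transmission: serialise to JSON and back, standing in for a network hop."""
    wire = json.dumps(asdict(sample))
    return EmotionSample(**json.loads(wire))


def present(sample):
    """Output: render the sample as a short textual description for the recipient."""
    if sample.valence > 0:
        tone = "positive"
    elif sample.valence < 0:
        tone = "negative"
    else:
        tone = "neutral"
    strength = "strong" if sample.arousal >= 0.5 else "mild"
    return f"{strength} {tone} ({sample.source})"


sent = read_input("self-report", True, 0.6, 0.8)
received = transmit(sent)
print(present(received))
```

In this toy version nothing is lost in transmission because the output stage understands exactly the fields the input stage produces. The harder design question raised above is what happens when the input and output modalities differ.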

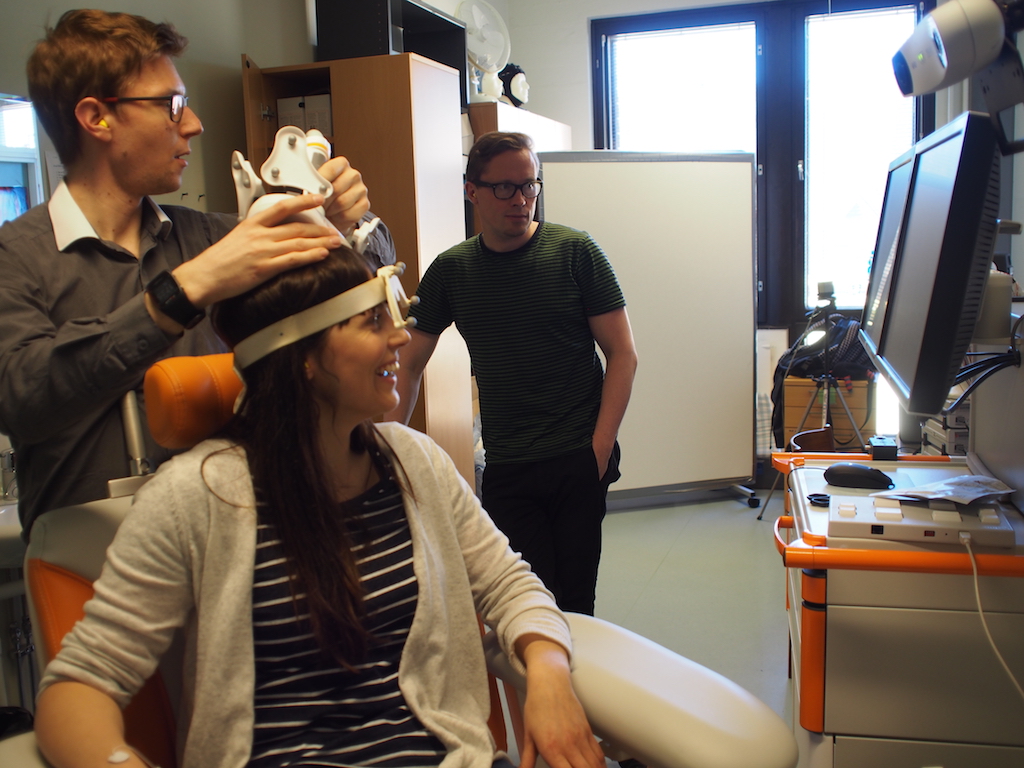

1.1 Finding NEMO

I discovered this topic for my thesis in the context of a collaboration between neuroscientists at Helsinki University, researchers at Teosto, forward thinkers and children’s media specialists from the Finnish National Broadcasting Company YLE, and myself. We are involved in a project Natural Emotionality in Digital Interaction (shortened NEMO), participating in the Helsinki University 375 year idea competition Helsinki Challenge. The purpose of our project is to find ways to widen the emotional bandwidth in digital media and digital communications. One part of the motivation is to ensure children’s development into emotionally mature adults in an environment where daily screen time keeps increasing. There is increasing concern that as children spend time in environments that lack possibilities for natural emotion expression and transmission, the development of socio-emotional skills will be impaired (Konrath, O’Brien, and Hsing 2010; Radesky, Schumacher, and Zuckerman 2015). However, no longitudinal studies to date have examined whether or not such a problem actually exists, and therefore answering this question is also one of the focus areas of the team. Another, more general reason, is improving communication quality and finding new areas for applications, art and games by creating a better emotional communication channel.

As a part of the contest, we were sent out to isolation at a resort hotel on the rural coast of Finland, to explore the possible dimensions of the impact of our idea. While taking a walk in the area, our team came up with reasons for the importance of empathy on the internet, ways to transmit emotions, ideas for experiments and some funny concepts, such as EmoTinder, an application that lets users find matches that naturally mirror their facial expressions. At this point, we stopped in a forest, and our team member Wesa Aapro exclaimed that we were not thinking big enough to make a profound impact. He suggested that we should apply one of his own thought devices, the “Big Story”: which he explained as basically taking a silly unrealistic idea and blowing it so much out of proportion that it actually becomes credible – too crazy to be doubted. After a brief discussion, ETP was born. Not being one to shy away from a crazy idea, I decided to write my master’s thesis about it.

My role in the project is to work at the intersection of interaction design, affective neuroscience and media technology. Having worked in the Cognitive Brain Research Unit for several years, but lately focusing on interactive media, experimentally, academically and as an entrepreneur, I have the necessary background to work on the bridge between scientific methodology and practical or artistic applications. In this thesis I decided to tackle the drafting of ETP from my own point of view, receiving feedback from the NEMO team leader and my thesis supervisor Katri Saarikivi and trying to incorporate the ideas we have discussed during the project. The background theory and proposed solutions are wholly my personal work – I hope this thesis can serve as a discussion opener for the NEMO project, and I expect the thesis itself to evolve as the project progresses. Because of this I am writing primarily for the web, and an updated version of the work can always be found at http://vatte.github.io/etp/.

1.2 The problem

A problem exists in digital communications. While the possibilities for transmitting meaning, facts and informational content online are efficient and diverse, emotional content is often lacking in quality and richness. It tends to get overly simplified: a realistic assessment and understanding of the conversational partner’s moods, feelings and emotional reactions often does not occur. Due to this lack of emotional information, achieving natural empathy online seems to be difficult. As a somewhat naive but easy example, expressing condolences to your friend for the loss of a loved one as a post on Facebook is just not the same as expressing this in person – a lot of emotional information will be missing. This is not to say that empathy does not exist online. Real empathic experiences are typical for certain online communities, especially those related to support in traumatic life situations, such as illness (Preece and Ghozati 2001). In these situations people can find a strong support network and a feeling of togetherness in a community without any sort of face-to-face interaction. Meanwhile, other internet communication platforms are characterized by anti-social behavior, especially evident in un-empathic and aggressive online conversational phenomena such as flaming and trolling on YouTube (Moor, Heuvelman, and Verleur 2010). These extreme examples of lack of empathy online make laypeople claim that there is a lot of emotion online and that we could do with less of it. My point of view is that the problem is not that people do not attempt to express their emotions online, but that the quality of the means we have for expression is poor.

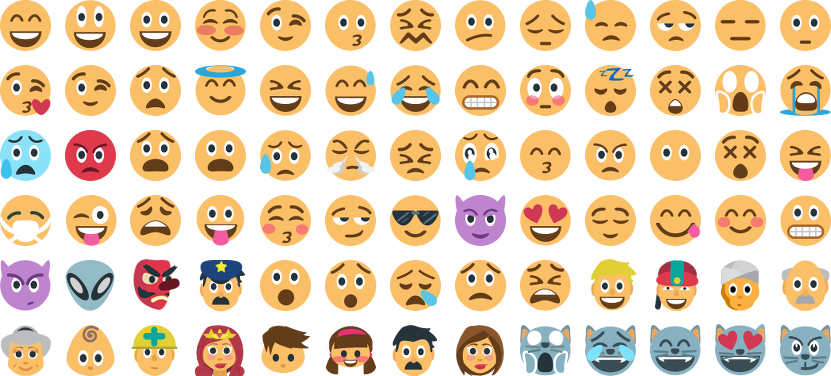

Textual communication lacks many of the cues and subtleties that make face-to-face communication so effective. To combat this, users have adopted new strategies to substitute physical cues in online settings (Reid 1993). Some of these are very creative, coming in the form of acronyms, new idioms, emoticons and emojis, and they are further discussed in the Representations section of the Output chapter of this thesis. Derks, Fischer, and Bos (2008) argue that even though emotions are very common in computer-mediated communication (CMC), and some forms of CMC even seem to reinforce them, there are most likely differences in the strength of the experienced emotions. Another interesting problem noted by Derks, Fischer, and Bos (2008) is that emotional communication online is usually extremely controllable, and as such may not represent the true emotion felt by the sender of the message. It is imaginable that people hit the like button on Facebook without actually experiencing a positive emotion at that moment: for example when liking a birthday wish while experiencing anxiety about getting older. If the emotional impulse is not preserved in the same way in the message, as felt by the sender, it can hinder real empathy between the sender and the receiver of the message.

A trend in CMC is an overestimation of our ability to interpret the intention of the sender correctly, combined with an often overly negative interpretation of the intended emotion by the recipient, especially when the explicit emotional information is low (Kruger et al. 2005; Y. Kato, Kato, and Akahori 2007). Kruger et al. (2005) suggest that this discrepancy stems from an egocentric point-of-view: the sender can imagine their message to have, for example, a certain tone of voice, but they are unable to accurately assess whether the intended message will be apparent to the receiver. Essentially we are not able to place ourselves in the position of our conversational partners, and evaluate their ability to understand our intended meaning as successfully as in real life.

The problem is not only one of efficient communication; parents are worried about their children’s development into emotionally mature adults, and many of them are limiting their child’s time spent on personal computers and mobile devices. Interestingly, this seems to be a trend among Silicon Valley executives: for example, the late Apple CEO Steve Jobs is described as a low-tech parent (Bilton 2014) who limited the use of mobile devices in the household. A global trend, however, is that face-to-face communication is in decline, and people increasingly prefer online communications to personal encounters (D. Russell 2015). The total amount of time spent using a screen-based electronic communications device, or screen time, has been linked with physical as well as psychosocial development and health issues, but also with positive aspects such as higher intelligence and better computer literacy (Subrahmanyam et al. 2000; Richards et al. 2010).

Some might argue that the ways that people interact have always evolved and that CMC is just another form of interaction that has its own characteristics. Perhaps children who grow up to understand the peculiarities and special features of CMC will become adept at expressing themselves in the ways that are possible in these environments. As the evolution of human cognition follows the evolution of technology, maybe the decline of traditional empathetic skills (Konrath, O’Brien, and Hsing 2010) is nothing to worry about. While there may be some truth to this assessment, it would be hard to argue that the interactive capabilities of computers have already fully harnessed the power of the human body and mind. In any case, making CMC more natural and able to take advantage of the built-in empathic circuitry of the human brain while developing these technologies can only have a positive effect on the quality of online communication.

Meanwhile, we are living in an age where the nature and structure of work is rapidly changing to fit a post-digital society. The effect of automation on a large quantity of low-skill jobs is expected to be considerable in industrialized countries, due to large employment sectors that are possible to be replaced by or made much more effective with digitalization. It is expected that not only manual labor and simple problem-solving, but also increasingly cognitive tasks can be automated in the future, as computing power increases and sophisticated methods for processing large amounts of data are developed. When exploring the extent of problem-solving in which computers surpass human cognition, it seems that the most important areas where people are still “unbeatable” are the realms of social cognition and creative thinking. Relying on neural processes such as mirroring, people make use of their subjective experience of themselves in understanding the emotional states of others. It is somewhat inconceivable that this type of processing could be automated, and therefore it is expected that the work people will concentrate on will emphasize socio-emotional skills. (Florida 2013; Pajarinen et al. 2015)

Generations may now be growing up in digital environments that are suboptimal for the development of skills that are essential for future work quality. As interpersonal communication and socio-emotional understanding become increasingly important skills – not only in social life, but also in employment – developing natural communication skills has a large effect on the economy and on well-being on a large scale.

1.3 Affective computing

Affective computing is a field of human-computer interaction concerned with the study and development of computers that can recognize, process and simulate emotions. Since being introduced at MIT Media Lab in the mid-1990s by Rosalind Picard, the field has responded to a lack of consideration for human emotions in the design of interactive computer systems. In the book Affective Computing, Picard (2000) argues that computers, as a result of the extremely logical structure of the underlying technology, have been mostly designed to act completely rationally and logically, in a way that produces the same result each time a certain set of actions is completed. This would seem like a good idea at first, but interacting with a completely rational and logical system is actually a very unnatural way for humans to communicate. The argument is that by not taking into account the complexities of human communicational behavior, especially regarding emotions, we are actually creating illogical and inefficient computer systems. If the functioning of the human brain is thought to be a pinnacle of problem-solving and a model for intelligent computers, dismissing emotional processing is ill-founded. In cognitive neuroscience there is a growing body of research showing that emotions continuously interact with cognitive functions such as memory and attention, and that areas of the brain associated with emotions also play an important role in cognitive processes (Pessoa 2008; Lindquist et al. 2012).

The field of affective computing has been especially concerned with the artificial intelligence of computers, or their artificial emotionality. By sensing the emotions of the user, computer programs can adapt to the user’s actions. Effectively using emotion in the adaptation is not trivial, and there is a risk of annoying the user if the emotional behavior of the computer is overly simplified. A related field, or a trend within affective computing, is known as affective interaction, which is more concerned with how emotional meaning is created and how it evolves in the interaction design process. The difference is that affective computing of the MIT variety has taken a very cognitivist, perhaps even reductionist approach to quantifying emotion, whereas the affective interaction movement has a more traditional design approach, considering emotions from the point of view of experience and phenomenology (Höök 2013).

The topic and content of this thesis draw a lot of inspiration from the affective computing field, but the thesis does not attempt to tackle issues relating to the design of affectively intelligent systems. Instead, the focus is on how to transmit emotions between two human users of communication devices. Therefore, the artificial intelligence and correct emotional behavior of the computer are not taken into consideration. The focus is rather on the optimal extraction and transmission of emotional content, its codification, and ways of representing emotional content to the receiver.

1.4 About telepresence

A simulation of physical presence that is as complete as possible, or telepresence, is something of a holy grail of communication technology. In some form, it was imagined at least as early as 1877, when the New York Sun wrote an article about a device known as “The Electroscope” (New York Sun 1877). An early illustration from 1910 by the French artist Villemard is shown in Figure 1. The benefits of telepresence over other forms of remote communication are obvious: the more perfect the simulation, the more indistinguishable the communication can be from physical reality. Douglas Engelbart (1968), in his classic “Mother of All Demos”, showed an early working version of video communication and collaborative working, demonstrating how he and a colleague could simultaneously work on a document while seeing a camera feed of each other on the same display and hearing each other’s voices. The demonstration had some minor issues with the video and voice feed being unreliable at times, but to anyone using current video conferencing tools it might seem ironic that we have not been able to work out those issues completely even now.

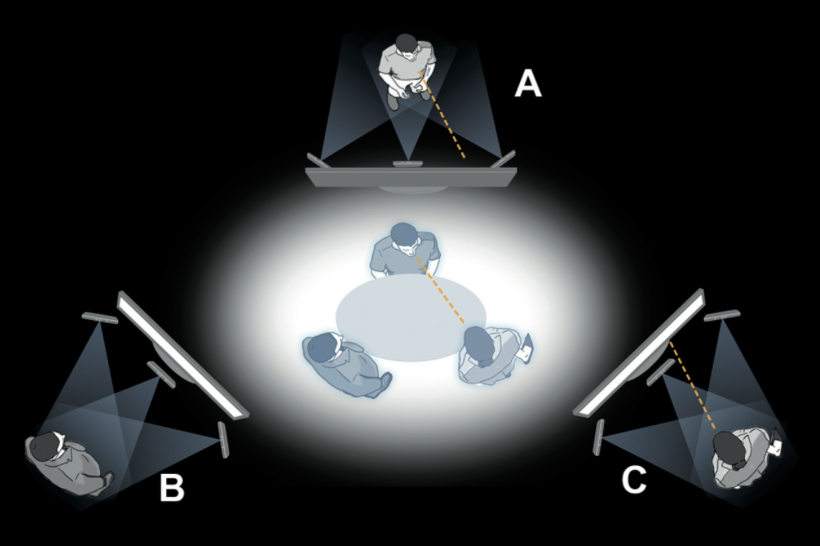

Achieving complete telepresence is difficult, and the setup needed to reach the state of the art is often not feasible or even desired (Figure 2). Instead, popular solutions are Skype, Google Hangouts, Facetime, or similar video conferencing platforms. Seeing your partner’s face while talking is a significant advance towards natural communication compared with voice-only communication, but the solutions are far from perfect. The lack of shared context and physical space, and the latency in video transmission and network transfer technologies, are bottlenecks that are hard to solve. The speed of light itself limits the round-trip latency from Helsinki, Finland to Wellington, New Zealand to 104 milliseconds measured on the earth’s surface. Latency in video and audio transmission is not only irritating to the participants in an interaction but may also interfere with synchronization between the people involved. Synchronization on the level of body movement, speech rate and brain rhythms has been connected to better cooperation (Stephens, Silbert, and Hasson 2010; Jiang et al. 2012). There is also a distinct lack of stimulation of senses such as smell and touch. Even with video, the lack of presence from a static camera, and with audio, the poor quality of sound transmitted from a single microphone, are problems. A possibility outlined in this thesis is to augment the emotional channel with data sources other than video and voice.
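The physical lower bound on latency mentioned above can be estimated with a few lines of code. The following is a rough sketch and not the calculation used for the figure in the text: it assumes a spherical earth with a mean radius of 6371 km, the speed of light in vacuum, and approximate city-centre coordinates, all of which are choices made here for illustration.

```python
import math

EARTH_RADIUS_KM = 6371.0           # assumed mean radius, spherical approximation
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum

# Approximate (latitude, longitude) in degrees; assumed for this example.
HELSINKI = (60.17, 24.94)
WELLINGTON = (-41.29, 174.78)


def great_circle_km(lat1, lon1, lat2, lon2):
    """Shortest distance along the surface of a sphere (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_round_trip_ms(lat1, lon1, lat2, lon2):
    """Time for light to travel there and back along the surface, in milliseconds."""
    distance_km = great_circle_km(lat1, lon1, lat2, lon2)
    return 2 * distance_km / SPEED_OF_LIGHT_KM_S * 1000


rtt = min_round_trip_ms(*HELSINKI, *WELLINGTON)
print(f"Lower bound on round-trip latency: {rtt:.0f} ms")
```

Under these assumptions the bound comes out on the order of 110 ms. The exact value depends on the coordinates and earth model chosen, and real network paths are slower still, since signals in optical fibre travel below the vacuum speed of light and do not follow a great circle.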

2 What are emotions?

To approach the topic of transferring emotions and emotional communication, understanding of emotions on conceptual, scientific and practical levels needs to be formed. The fields of affective science and affective neuroscience have gathered a vast amount of knowledge and tools, and I will go over some popular theories and frameworks. Having a better understanding of these concepts will help in creating emotionally meaningful applications, and some of the theories are interesting in their own right. From an interaction designer’s perspective different theories of emotion and the emotional frameworks used to discuss and quantify emotions offer interesting starting points for concepts and designs. Taking a framework as a basis for an emotional concept or design can help in making it meaningful, and the other way around; design concepts can be used to test the usefulness of the different emotional frameworks.

The word emotion itself can be understood in a variety of ways, and it is used colloquially for a range of things varying from mild to intense, simple to complex, brief to extended, and private to public. In the science of emotion, or affective science, different words are used to distinguish different, related phenomena (J. J. Gross 2010):

Affect is a wide umbrella word, often used to encompass the different phenomena concerning valenced, i.e. positive and negative, internal states.

Attitudes are the most stable beliefs held by an individual about the valence of things.

Moods are passing and more long-term states, often not directly or simply related to a specific cause.

Emotions are the most short-lived reactions and responses to events and situations, reflecting the current goals, attitudes and mood of the individual, and they work to appraise the situation. Emotions can further be explained as the conscious feeling, the behavior the emotion causes, and its physiological manifestation.

Even these definitions are disputed (J. J. Gross 2010), but I will follow them as a guideline when using these terms in this thesis.

2.1 Theories of emotion

Theories of emotion are a topic of psychology and philosophy, and are related to the nature of emotions, their evolution and the meaning of emotions. A consensus on the topic does not exist among psychologists. Indeed, it has been described as the most open and confused chapter in the history of psychology, with over 90 definitions of emotion having been proposed during the 20th century (Plutchik 2001). The topic is to some extent overly theoretical from the point of view of interaction design, and I will try not to go into too much detail. In any case, it is important to have an overarching understanding of what emotions most likely actually are, or at least what kind of conceptual devices are meaningful from a scientific point of view, to better understand how emotions can be used in design.

The most intuitive idea is usually that emotions are the same thing as feelings, and this is referred to as the common-sense theory of emotions (James 1884). We tend to expect emotions to have a mental basis. This line of thinking goes that an emotion occurs in our mind, possibly caused by external sensory input, and the occurrence of our emotion in turn makes us and our bodies react in a certain way. An example of this line of thinking is that meeting a friend makes us happy, which in turn leads us to smile and let out a joyous sound, in that order. Or we are sad because we lost a relative, so we start to cry. Scientific theories of emotion have been divided over the relative importance of the unconscious and the body versus conscious feelings in the whole emotional experience, and also over whether the unconscious emotion, the bodily reaction, or the conscious feeling happens first. The theories explained below form a timeline of emotion research starting from the 19th century and ending in the present day. This history has seen the general scientific opinion shift back and forth between the two main schools of thought: does the bodily reaction precede the conscious feeling, or the other way around? As no consensus has been reached, both of these viewpoints can be used as guidance when looking at different possibilities for approaching emotion transmission.

2.1.1 James’ theory

William James (1884) was among the first scientists to attempt to create a psychophysiological theory of emotions. He was interested in the fact that emotions seem to produce a physical, bodily sensation, and that this sensation seems to be strongly characteristic of different emotions. Rather than the intuitive conclusion that the bodily reaction arises from the mental feeling, James proposed that “the bodily changes follow directly the PERCEPTION of the exciting fact, and that our feeling of the same changes as they occur IS the emotion.” Body-first theories of this type are now known as somatic theories of emotion. This radical way of thinking suggested that the body had a more important role than previously thought, giving a central role to the perceived bodily sensation, the embodiment of the emotion. James’ theory was also a shift toward a more evolutionary way of thinking about how psychophysiology and the brain function, drawing inspiration from, among others, Darwin’s (1872) work on emotion expression. In his essay, James presented convincing introspective experiments to support his claim, ranging from the surprising sensation of shivers while experiencing art, to the immediateness of the bodily fight-or-flight response when perceiving surprising movement in a dark forest, and the immediate reaction to common phobias, for example a small boy fainting when seeing blood for the first time. James does acknowledge that some emotions seem to arise from thought processes, and he distinguishes “standard” emotions from moral, intellectual, and aesthetic feelings.

James’ theory was influential, but it left a gap in the whole picture, especially considering the variety and complexity of emotions, and the differences in emotional experiences between individuals and their origins. The Cannon-Bard theory of emotion was created as an alternative to address some of these problems, stating that an event simultaneously causes a physiological response and a conscious emotion (Cannon 1927). The theory posits that emotional reactions in the body and emotional feelings are simultaneous and independent processes, and that they originate in different areas of the thalamic region of the brain. Cannon’s and Bard’s main issues with James’ theory are the requirement of bodily reactions for emotional changes, and doubt about the physiological specificity of emotions in comparison to other phenomena, such as physical exercise.

2.1.2 Cognitive appraisal

The Schachter-Singer theory, also known as the two-factor theory, suggests that an event does cause a physiological response (Schachter and Singer 1962). According to Schachter and Singer, the physiological responses in themselves do not distinguish between emotions, and a situational context is necessary for the conscious feeling. The identification of the cause of the physiological response is at the center of this theory: it causes us to label the emotion, while the physiological response informs us of the intensity of the emotion. This theory was backed up with a clever experiment, in which test subjects were injected with epinephrine, causing physiological arousal. The subjects reported different emotional states depending on situational factors, and when no emotionally significant situational factors were present, they attributed their feelings to cognitions. The two-factor theory has been criticized, and the methodology of the experiment has not survived scrutiny regarding whether the injection of epinephrine had any effect on the induced emotion in the first place, as in another experiment no difference was found in the reported reactions under the influence of epinephrine as opposed to a placebo condition (Marshall and Zimbardo 1979).

While failing to explain certain phenomena, the Schachter-Singer theory inspired an influential set of theories known as the cognitive appraisal theories of emotion. Appraisal theories take the view that the experience of emotion is based on the appraisal of an event. Labeling the event with a certain emotion triggers the emotion and the accompanying physiological response. In this view, the cognitive appraisal always comes first, and only after this appraisal can a bodily response be felt. Without a cognitive appraisal, no emotion is felt: the emotion always arises from the appraisal. Appraisal theories and the Schachter-Singer theory are cognitivist theories of emotion, placing a lot of importance on the conscious, cognitive processes, and less on the sub-conscious and somatic experience. The merit of appraisal theories is that they are able to explain different kinds of emotional phenomena, both emotions that arise from thoughts and events, and individual differences in response to the same stimulus. Appraisal theories have been most popular among psychologists, partly thanks to influential work by Richard Lazarus (1982).

2.1.3 Somatic theories

Lately, a modern set of somatic theories has surfaced and managed to stir up discussion. Damasio’s (1996) somatic marker hypothesis holds that bodily sensations are stored in the brain and evoked in the ventromedial prefrontal cortex, producing a similar sensation based on what bodily sensations have accompanied an event in the past. Emotional experiences can, according to this view, be divided into primary and secondary inducers: primary inducers produce the bodily sensation, and secondary inducers trigger a similar bodily sensation based on the memory imprint caused by primary inducers. Prinz (2004) defends the view that a bodily reaction occurs immediately following a perception, and that the bodily state itself is the emotion. According to Prinz, the conscious process is actually what the emotion represents. For example, a perception of a snake causes the bodily sensation of fear, which in turn is represented in the conscious mind as danger.

Dimberg, Thunberg, and Elmehed (2000) showed in an experiment that emotional contagion works on an unconscious level: when shown pictures of faces expressing an emotion, their test subjects would report feeling the same emotion, even when the images were shown for such a short time that the subjects could not describe what they saw. The result of this experiment can be seen as counter-evidence against cognitive appraisal theories, especially views that consider that appraisal needs to be a conscious process – there has to be a mechanism that produces this emotional contagion effect faster than, and irrespective of, a conscious evaluation of the context. Another interesting experiment by Strack, Martin, and Stepper (1988) divided subjects into three groups and showed them cartoons: one group held a pen in their mouth to keep the mouth contracted, one group held the pen between their teeth forcing a smile, and one group held the pen in their hands. The results were that the smile group rated the cartoons to be funniest, the contracted-mouth group rated them to be least funny, and the ratings of the hand group were in between.

The specificity of psychophysiological patterns between different emotions and the evolutionary basis of these patterns enjoy strong experimental support (Arnold 1945; Ekman, Levenson, and Friesen 1983; Levenson et al. 1992; Picard and Daily 2005). This does not really lend definite proof for or against the different theories of emotion, but it offers some evidence against the strictest cognitivist views, as different emotions do produce different physiological responses. It cannot be used as an indicator for the order of the mechanism: whether the body reacts after a cognitive appraisal, or whether it reacts directly to the stimulus and is involved in the communication between the emotional parts of the brain and the cognitive parts, as somatic theories suggest. On the other hand, knowledge of this specificity is definitely useful in that it allows for the use of peripheral physiology, i.e. parts of the body other than the brain, as inputs for emotionally meaningful information.

2.2 Emotional frameworks

To practically understand and utilize emotions in research and applications, a framework for describing emotions is needed. Typically, emotions are either divided into distinct categories or mapped out dimensionally. These frameworks can be used as practical tools for reporting and representing emotions.

2.2.1 Categorical emotions

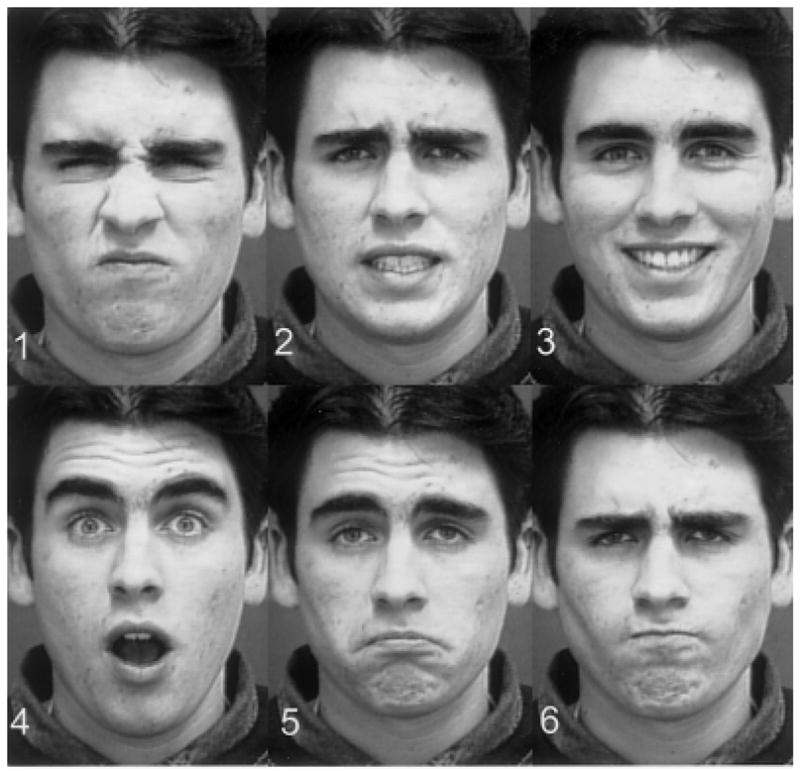

Categorical, discrete emotions are both a conceptual tool for working with emotions and a scientific theory of a set of basic, universal emotions. Beginning with Charles Darwin’s book The Expression of the Emotions in Man and Animals, this theory holds that a set of emotions are biologically determined, culturally universal and not necessarily unique to humans (Darwin 1872). Ekman et al. (1987) have provided experimental evidence that a set of basic emotions and their accompanying facial expressions are perceived in the same way across cultures. Ekman’s view on emotions is that a set of basic emotion families exists, each family containing a set of similar states. This viewpoint also holds that the borders of each emotion are very clear and not at all fuzzy, and that the basic emotions exist separately from each other both in expression and physiology (Ekman 1992).

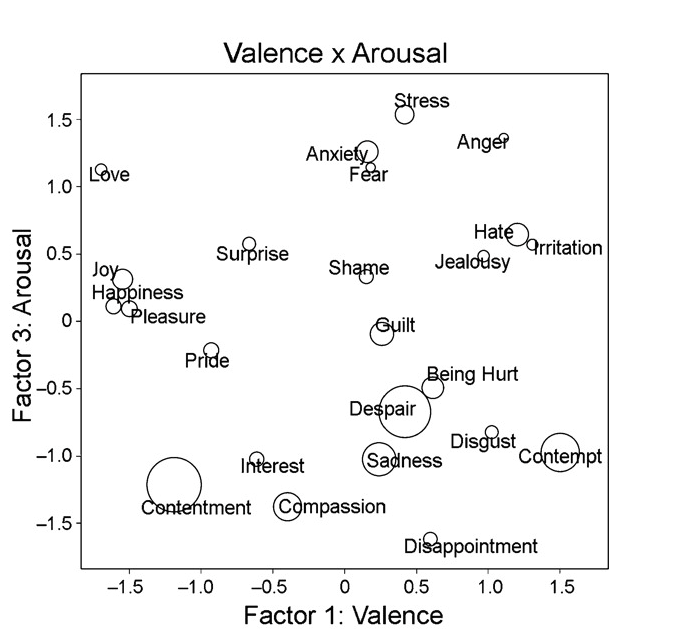

The exact set and number of basic emotions is debated, and no clear solution exists to determine the best set. Ekman (1992) proposes six basic emotions: happiness, surprise, sadness, fear, disgust and anger, on the basis of distinct facial expressions, as seen in Figure 3. Ekman also proposes a set of criteria for what is required of a basic emotion, but acknowledges that it is not simple to leave out certain emotions, and that the big six do not all fulfill the criteria equally. In any case, these six prototype emotions are widely used, sometimes with contempt added as a seventh emotion. Other frameworks propose much wider sets of emotion categories. For example, J. R. Fontaine et al. (2007) identified 24 emotion terms that are commonly found in emotion research and everyday language, which are mapped onto the 2-dimensional emotion space in Figure 4.

2.2.2 Dimensional emotions

Another way to look at emotions and to take their complexity into consideration is dimensional mapping. Taking one or more dimensions, emotions can be mapped out even without labeling them explicitly. Finding the right dimensions is not easy. One possibility is to simply rate how much of certain basic or categorical emotions are present, which is in a way a mixture between categorical and dimensional models. This is often not practical or desired due to the unavoidably large number of dimensions: already six if only the most basic emotions are used. Another option is to take a set of dimensions that best distinguish emotions, typically at least the valence dimension, i.e. happy–sad or pleasurable–unpleasurable.

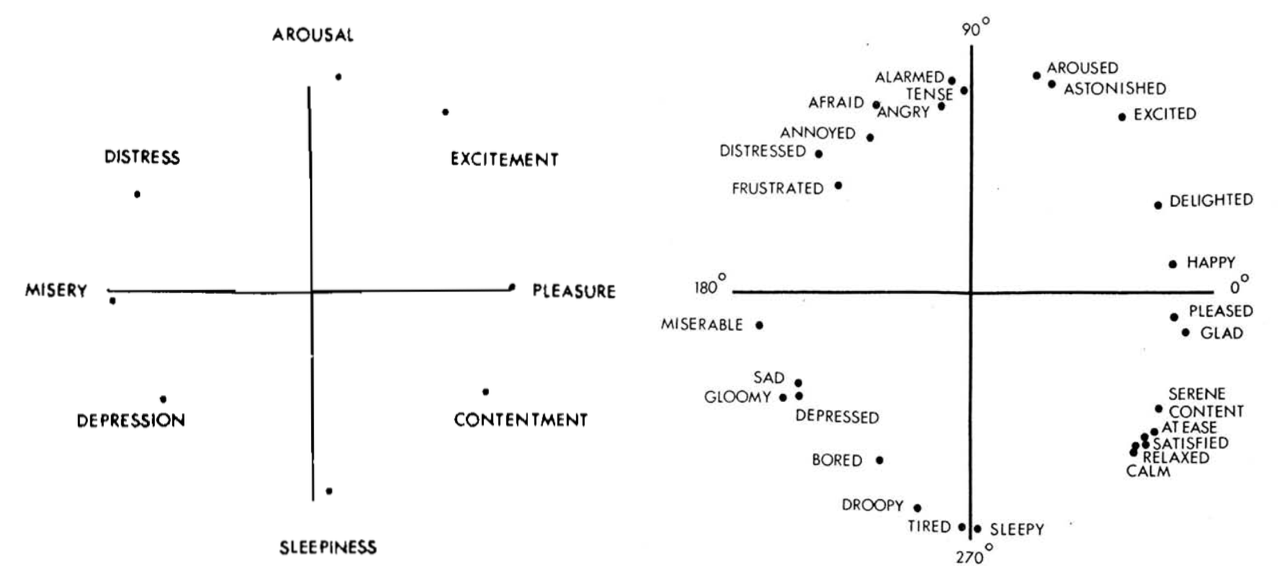

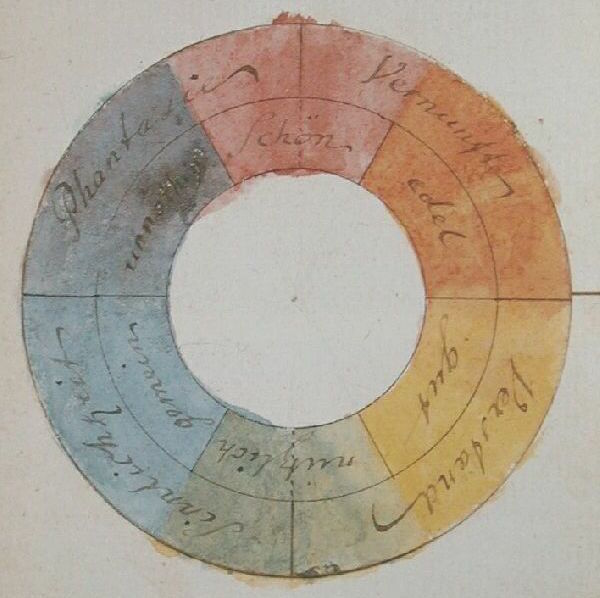

One commonly used tool is the 2-dimensional emotion space (2DES), consisting of an axis of arousal and an axis of valence. Arousal denotes the energy, with passive emotions such as sadness having a low arousal, and energetic emotions such as surprise having a high arousal. Valence represents the positive and negative scale of the emotion, with emotions such as happiness on one end, and disgust on the other end of a good–bad, pleasurable–unpleasurable axis. The 2DES scale is supported by J. A. Russell (1980) and his circumplex model of emotions (Figure 5), in which emotions are mapped onto a circle with pleasure at 0 °, excitement at 45 °, arousal at 90 °, distress at 135 °, displeasure at 180 °, depression at 225 °, sleepiness at 270 °, and relaxation at 315 °.
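As a minimal sketch of how the circumplex relates to the 2DES, the angles listed above can be projected onto the valence and arousal axes with basic trigonometry. The function names and the `intensity` parameter are illustrative assumptions and not part of Russell's model; the sketch simply treats valence as the horizontal axis and arousal as the vertical axis.

```python
import math

# Angles (in degrees) of the eight circumplex anchors as listed in the text.
CIRCUMPLEX = {
    "pleasure": 0, "excitement": 45, "arousal": 90, "distress": 135,
    "displeasure": 180, "depression": 225, "sleepiness": 270, "relaxation": 315,
}

def to_valence_arousal(angle_deg, intensity=1.0):
    """Project a circumplex angle onto the valence (x) and arousal (y) axes.

    `intensity` is an assumed radius; 1.0 places the point on the unit circle.
    """
    rad = math.radians(angle_deg)
    return intensity * math.cos(rad), intensity * math.sin(rad)

def nearest_label(valence, arousal):
    """Return the anchor whose angle is closest to the given 2DES point."""
    angle = math.degrees(math.atan2(arousal, valence)) % 360

    def angular_distance(label):
        diff = abs(CIRCUMPLEX[label] - angle) % 360
        return min(diff, 360 - diff)

    return min(CIRCUMPLEX, key=angular_distance)
```

For instance, `nearest_label(-0.7, 0.7)` lands on the distress anchor, since a negative valence combined with a high arousal corresponds to an angle of 135 °. A point at the exact origin has no defined angle, so an application would probably want to treat it as neutral rather than look up a label.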

J. R. Fontaine et al. (2007) show in their comprehensive study that two dimensions are not enough to capture the similarities and differences in the meaning of emotion words. They suggest instead that four dimensions are needed: evaluation–pleasantness, potency–control, activation–arousal and unpredictability. In practice, four dimensions are sometimes too many for interfaces and reporting, which is one reason for the popularity of the two-dimensional model. The 2DES can be used to continuously report emotions, even on an evolving task, as demonstrated in the Representations section of the Input chapter.

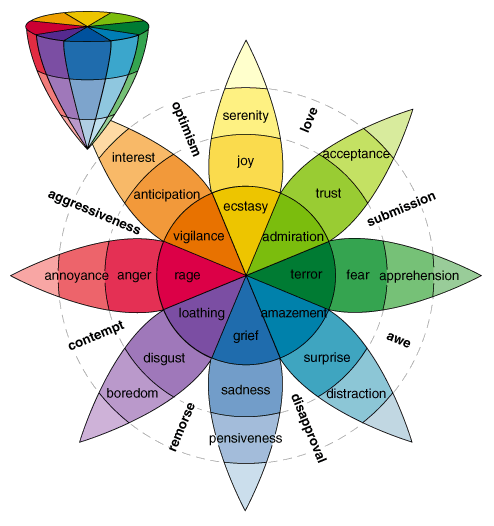

2.2.3 Alternative frameworks

Plutchik (2001) has created a three-dimensional circumplex model of emotions. In this approach basic emotions are placed similarly to colors on a color wheel, similar emotions next to each other, and opposites 180 degrees apart (Figure 6). The work is based on analyzing and grouping hundreds of emotion words and trying to organize them. From the circumplex model emotions can be picked like colors from a color-wheel, combining different basic emotions to yield composite emotions on a continuous scale, similarly to a color gradient. In this model, eight basic emotions are used. They are organized into opposite pairs: rage–terror, vigilance–amazement, ecstasy–grief and admiration–loathing. On the third, depth dimension intensity of the emotion is represented, resulting in a three dimensional cone mapping of emotions.

Frijda (2004) has developed a view that relates emotions directly with actions, or more accurately action tendencies. Emotions are motivations and action readiness, manifested in the strong physical reactions and quick decisions characterizing passionate emotions, such as anger or lust. Frijda takes the idea further, and considers that all emotions are characterized by certain motivations to act; for example admiration, fascination and being moved are not usually accompanied by strong reactions, but rather by a striving to be near the object that causes these emotions. A suggested list of action tendencies and related emotions includes:

- approach – related to desire,

- avoidance – related to fear,

- being-with – related to enjoyment,

- attending – related to interest,

- rejecting – related to disgust,

- agonistic – related to anger,

- interrupting – related to surprise,

- dominating – related to arrogance, and

- submitting – related to resignation (Burkhardt et al. 2014).

Marvin Minsky (2007) believes that emotions are more complex mental processes than is commonly thought. He suggests that emotions might not be primal or basic at all from a structural or processing point of view, and that the difficulty in describing emotions actually stems from their complexity, not from their being too basic and elemental to reduce into smaller factors. According to Minsky’s view, emotions are different “Ways to Think”, and the conscious emotion is just us paying attention to a thread or parallel process that is already running in our brain. He considers the mind to be a set of complex systems, and opposes views that consider consciousness to be driven by a single identity, or self, and he seems to regard the phenomenological way of contemplating the mind from an individual point of view as fruitless. Minsky, as an influential artificial intelligence researcher, is mostly interested in modeling and replicating mental processes, and his views draw inspiration from how modern computers work, with many subprocesses running simultaneously and switching back and forth from idle to active. In accordance with Minsky’s views, recent theories of brain function rely on network and complexity theory. Also, current views on the entanglement of brain processes related to emotions and cognition support Minsky’s views on emotions (Pessoa 2008; Lindquist et al. 2012).

2.2.4 Unlabeled emotions

A possibility I want to bring forward in this thesis is something I call unlabeled emotions. By not labeling emotions in our models and while encoding and decoding data, we can preserve emotional information in emotion-related phenomena. By taking emotionally expressive forms of media and emotionally relevant information, we can create models that link information directly to expression, leaving the emotional impression and interpretation to ourselves. Many forms of media have emotion-eliciting content; people often relate sounds, music, colors, images and video to emotions. This kind of information comes through different forms of communication.

Contextual and explicit information are present in the lyrics of a song, the object of the image and the scene of the video. For example a video from a war zone might elicit strong negative emotions of anguish, anxiety and fear due to the subject, while a picture of a wedding ceremony might induce feelings of love, wanting, even transcendence. This kind of information can be very subjective, for example the war video can also carry feelings of bravery and freedom for a guerrilla fighter, and the wedding picture might represent negative emotions of jealousy, anger and sadness for an alternative, unrequited suitor or a disappointed mother-in-law.

The emotional information might not be present in the subject at all, as even abstract art in the form of seemingly meaningless patterns of color, sound and form can elicit emotion. The importance in this case can fall on the timing and sequence of events, and on the symmetry and meaning contained in colors or pitches. This type of information can be culturally dependent and subjective. Different cultures assign very different meanings to, for example, colors. This culturally and personally dependent understanding of emotional meaning in expressions is evident in the way that we find it easier to communicate with people who are close to us culturally, or whose cultures we have been exposed to over an extended period of time, even when language barriers are not a factor. Also, we are usually better at understanding the emotions of people who are close to us personally than we are at understanding strangers, which already suggests that emotional communication is personal, subjective, and an interactive effort – the emotional information is not easily quantified and standardized.

On the other hand, certain components of emotional information in media may well be very much built-in, stemming from evolutionarily early features, emotional grunts, movements and other expressions. Research is able to find universal or nearly universal components of language, prosody, movements and expression that are understood in the same way across cultures and continents. For example, Sievers et al. (2013) found that emotional interpretations of both music and movements share common features across cultures.

We have evolved to be extremely good at interpreting and reacting to emotions, as described in the next section, Empathy. Therefore it makes sense to leave the task of emotion interpretation to ourselves, instead of trying to create models that represent phenomena in the context of emotional descriptors, which are already difficult to conceptualize in themselves. Not needing to consciously describe and communicate emotions brings us closer to a natural situation, in which we are not forced to think about emotions abstractly, but only through experience, impression and expression.

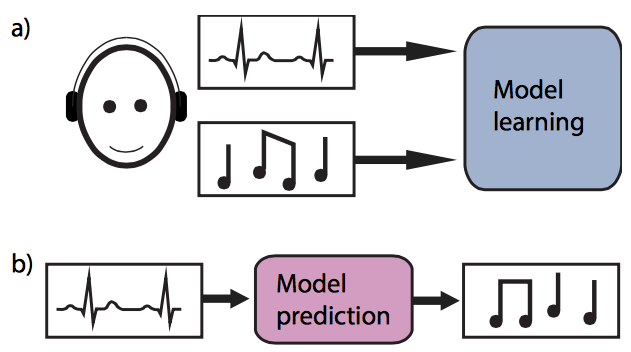

For example, music is considered to convey a lot of emotional information and, as discussed earlier, there is substantial evidence that emotions and physiology are deeply linked. Therefore it should be possible to create a model that takes emotional information from physiology and encodes, transmits and decodes it through music in an understandable form. Later in this thesis I will present a project called Musical emotion transmission, which is an experiment trying to do exactly this.

2.3 Empathy

Empathy is an ability to understand another individual’s internal state by identifying the similarity of your own mind and feelings with the other, and through that similarity sharing the experience of the other while still clearly understanding the distinction between self and other. The idiom to place yourself in another’s shoes is an everyday description of empathy. Empathy is a natural, innate ability, not a cognitive skill that needs to be explicitly learned, but the empathic abilities do develop through interaction with others. Also, the situation that gives rise to the empathic experience does not require active mental decomposition. Definitions of empathy vary, and sometimes it is explicitly defined as a group of processes related to affective reactions to affective states of other people. Others have chosen to separate empathy into two main concepts: affective empathy for reading other people’s emotions by experiencing them in yourself, and cognitive empathy for understanding the thoughts and feelings of other people as a mental process. (Decety and Jackson 2004; Walter 2012) The problems with this affective/cognitive division are substantial, but lengthier discussion on the topic is beyond the scope of this thesis. For the sake of practicality, I will content myself with using the cognitive/affective dichotomy.

The distinction between different empathy-related phenomena is not always clear, and the definitions are bound to change over time, but Walter (2012) proposes working definitions in which cognitive theory of mind, cognitive empathy – or affective theory of mind, and affective empathy are distinguished as different concepts with different neural underpinnings. Theory of mind is the ability to understand mental states of others, and to understand the concept that other people have their own consciousness, thoughts and feelings. Cognitive theory of mind refers to the ability to mentalize and understand the cognitive states of others. Cognitive empathy is the ability to mentalize and understand the affective states of others, without necessarily experiencing similar states yourself. Affective empathy is the ability to experience the emotions of others. Affective empathy is in part enabled by emotional contagion. Emotional contagion can be described as a “lower form” or one of the underlying mechanisms of empathy. It does not necessarily require you to consciously distinguish between your own feelings and those of others, but it is rather simply adopting the feelings of people around you. This kind of contagion occurs also in pack behavior like laughter and the spread of arousal and alertness in panic situations. Affective and cognitive empathy usually occur together, but their neural pathways are distinct and they can also occur separately – especially cognitive empathy is not necessarily accompanied by affective empathy in all situations. (Walter 2012)

Mirror neurons have spurred a lot of discussion since they were discovered in the premotor cortex of the monkey (Di Pellegrino et al. 1992). These neurons activate both when a monkey performs an action, and when a monkey observes another individual perform the same action. Experiments suggest that a similar neuronal matching system also exists in humans (Fadiga et al. 1995). This phenomenon has been associated with natural mind-reading abilities, theory of mind and the ability to simulate actions without performing them. Mirror neurons are often related to a philosophical theory known as the simulation theory, which suggests that we understand mental states and their expressions by internally simulating the same state in ourselves. The opposing theory is the so-called theory-theory, which suggests that we have an internal model of mental states and understand other individuals’ mental states on the basis of a theory of mind. (Gallese and Goldman 1998; Walter 2012)

2.3.1 Empathy and evolution

Frans De Waal (2010) explains the evolution and development of empathy in his book The Age of Empathy: Nature’s Lessons for a Kinder Society. Aimed as a direct response to competition- and selfishness-oriented views on biology and evolution, such as the one presented in Richard Dawkins’ The Selfish Gene, de Waal presents a comprehensive picture of cooperation and empathy in nature, and explains why cooperation skills are essential to many species of animals. A strong myth has been that war and competition are essential and central to human life, but de Waal brings in evidence from psychology and neuroscience for the automatic and central nature of empathy and helpful tendencies. De Waal argues that selfishness and aggression are only some of the capabilities of the human mind and of certain genes in the genome, and that genes for compassion, selflessness and empathy are equally, if not more, important. By emphasizing selfishness in our world view we are actually fostering the development of those behaviors and capabilities, whereas if we focused more on the positive behaviors also present in biology and our nature, we would start enacting them more.

De Waal describes human empathy in three different layers. The first layer is emotional contagion, which was already discussed earlier. The second layer is feeling for others, which happens through bodily mirroring and is likewise common in other animals: chimpanzees who see other chimpanzees reach for a banana will stretch out their arms to express their support and understanding of the other’s predicament. The final level is targeted helping. It is the ability to get into another’s mind and be able to help in the right way for a given situation, like offering support for someone who is hobbling – it happens almost instinctively. This type of perspective taking and helping happens in animals, and for example chimpanzees will spend a lot of time consoling a member of their community who has lost a child. (De Waal 2010)

De Waal, as a primatologist, has studied chimpanzees extensively. Chimpanzees have a strong sense of ownership, and they do compete for resources. What de Waal has found is that chimpanzees are also prone to sharing: when a chimpanzee acquires food, it starts to share with its peers, and before long the whole chimpanzee colony has received its part. According to de Waal, this behavior is not limited to primates, but is also evident in at least all the other mammals. This is not to say that empathy is uniform in animals, or that it would be an either–or capability that some animals possess and others do not. Instead, empathy and emotions are a distinct set of evolutionary features that manifest in a variety of ways, and the further along the phylogenetic tree we look from humans, the more alien the forms of evolutionary empathy may seem to us. (De Waal 2010)

2.4 Music and emotions

Music and emotions are deeply linked, to the extent that music is often described as the language of emotions, and such descriptions are seldom met with objections or denials. Emotional experiences involving music are common for listeners and performers, and it is extremely typical for people to listen to music, to dance and to play music in order to influence their emotions – to feel happy, to experience sadness, and to concentrate or to get distracted.

Music expresses emotion, either intentionally or unintentionally, as listeners seem to perceive emotions in music without fail. The perception of the expressed emotion is somewhat consistent: when many listeners are asked to report what emotion they perceive in a piece of music, they respond similarly. The accuracy of the responses is not great, in the sense that listeners report perceiving similar emotions, but there is variation in the nuances. This ability does not require musical training: the perception is similar for trained musicians and for musical novices. Interestingly, Sievers et al. (2013) conducted an experiment that suggests that emotional features in both music and movement are perceived similarly across cultures, in this case comparing subjects from the USA and an isolated tribal village in Cambodia. The range of emotions that can be expressed by music is also vast: at least happiness, sadness, anger, fear and tenderness can be reliably identified. (Juslin and Laukka 2004)

The ability of music to induce emotion in the listener, to make the listener feel a certain way, is not considered to be as clear as music’s ability to express emotions. Music provides emotional experiences and it is used for mood regulation through adolescence and adulthood (Saarikallio 2011). However, it is not clear which emotions music actually evokes, and how those emotions relate to emotions arising from other situations. Is musical fear, for example, the same emotional state as the real fear felt because of a perceived threat? Positive feelings such as enjoyment, happiness, fascination, relaxation and curiosity are most commonly linked with voluntary music listening. Sadness is also a commonly reported feature in music, but at least some listeners report feeling good when listening to sad music, which suggests that musical sadness can be a positive emotion (Huron 2011). The cause of musical emotion induction is also not clear. Suggested components are musical structures’ acoustic similarity to emotional speech prosody, the build-up and breaking of musical expectations, arousal potential, emotion contagion, associations, mental imagery, and the social context. (Juslin and Laukka 2004)

2.5 Summary

The previous sections demonstrate how multifaceted the theoretical views on emotions can be – and how tricky the experience of emotions is to investigate experimentally. In terms of this thesis, understanding the core findings from literature promotes a deeper view into the topic of expressing, receiving and transferring information on emotions. The frameworks and theories of emotion should not be seen as excluding each other. Rather, inspiration can be drawn from several theories at the same time, and it is up to the evaluation of applications to determine which tools and theories are most useful. From a practical perspective, making use of the findings on physiological correlates of the emotional experience is very important. Also, the expression of emotions through the body, face and forms of media, such as music, can be utilized.

II The Protocol

The second part of my thesis is about the emotion transfer protocol itself: why it is needed, what it is based on, and how we can begin constructing it. The general concept is divided into three components: Input, Output and Transmission, organizing the available knowledge and providing suggestions for transmitting emotions. In the last chapter of this part, Conclusion and proposal, I synthesize the information presented earlier in this thesis and reflect on the possibilities for constructing an actual protocol.

3 Input

Fields of affective neuroscience and affective computing have explored reading emotions extensively, and with the use of physiological sensors, cameras, observation and self-reporting we have a wide starting point. In this chapter I will go over different possibilities for gathering emotional information from a user, but extracting emotional content from the gathered data, or encoding, will be mostly dealt with in the Transmission chapter.

3.1 Reporting

The simplest way of emotional communication is simply stating an emotion. This approach can be used in verbal communication, e.g. “I am very angry with you!” or “I feel so happy”, and it is common when a strong exclamation of emotion is intended. This type of communication is very simple from a transmission standpoint, as the input is the same as the output.

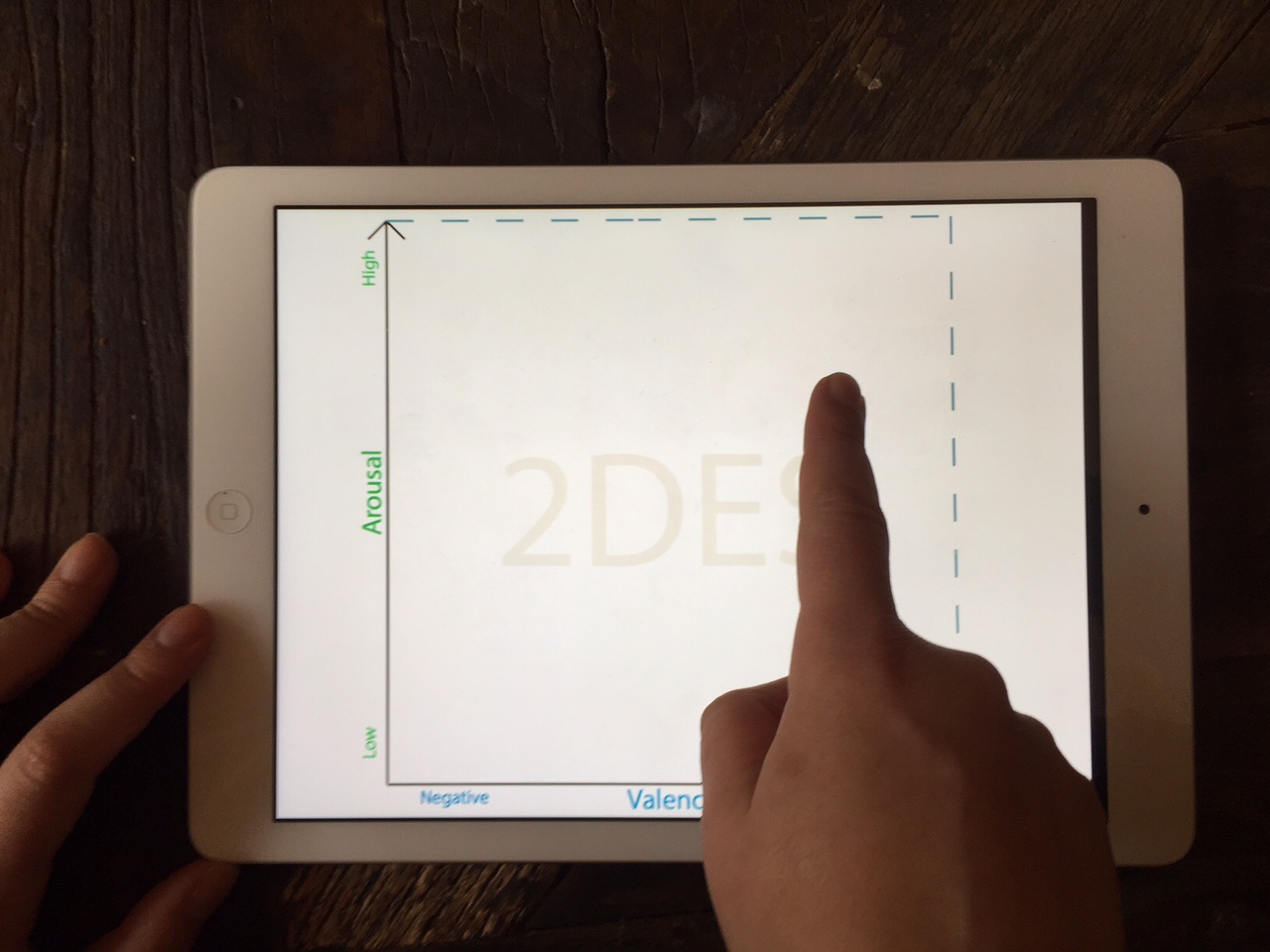

Similar to verbal emotional reporting, researchers use different types of questionnaires for gathering information about the emotions subjects are feeling. These questionnaires usually follow the previously discussed Emotional frameworks, namely Categorical emotions and Dimensional emotions. Dimensional emotions have also been used for real-time input to rate the emotional content in continuous media, such as video and music. The 2-dimensional emotion space is an especially useful tool. Because of the two-dimensional layout, pen and paper, a mouse cursor or a tablet computer, as in Figure 7, can be easily used to input emotion on the 2DES.
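A minimal sketch of this kind of input, assuming a rectangular reporting area on a screen where the pointer position is given in pixels from the top-left corner. The function name and the choice of a −1 to 1 range for both axes are assumptions made for illustration, not a fixed convention of the 2DES.

```python
def pointer_to_2des(x, y, width, height):
    """Map pixel coordinates (origin top-left) to (valence, arousal) in [-1, 1].

    Valence runs left (negative) to right (positive); arousal runs
    bottom (passive) to top (energetic).
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    # Clamp so that a pointer dragged outside the area still yields a valid report.
    x = min(max(x, 0), width)
    y = min(max(y, 0), height)
    valence = 2.0 * x / width - 1.0
    arousal = 1.0 - 2.0 * y / height  # screen y grows downward, arousal grows upward
    return valence, arousal
```

Sampling this function at a fixed interval while the subject moves the pointer would give a continuous valence and arousal trace that can be aligned with the timeline of the video or music being rated.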

3.2 Expressions

In this section I will give an overview of aspects of the human emotional expression that are immediately apparent and partly controllable by the person herself. These include facial expressions, body posture and movements, as well as vocal cues that contain emotional information.

3.2.1 Face

The face conveys a large amount of emotional information, and it is one of the primary sources of this information in offline social situations. A video or still image of the face is powerful in itself, which is one reason for the relative popularity of video conferencing platforms. Facial expressions are one of the starting points for the theory proposing that a set of “basic” emotions are built into humans and animals, as explained in Categorical emotions.

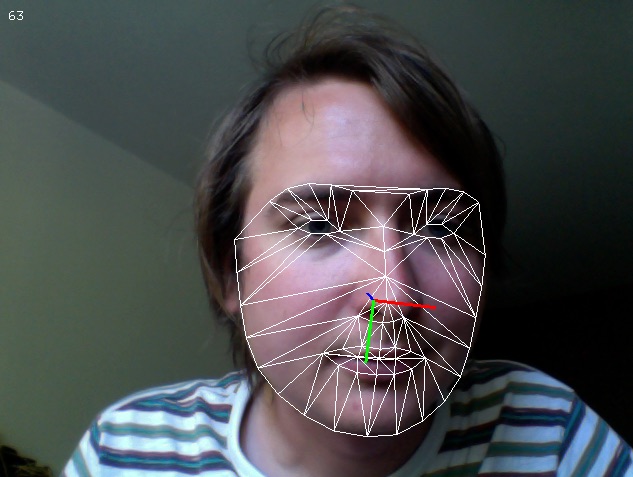

To start working with facial data in interactive applications, we need a system for tracking facial features, such as the position and shape of the mouth, the lips, eyes and eyebrows. This can be achieved using a regular web cam and a set of image processing algorithms. A popular algorithm for facial feature tracking is known as deformable model fitting by regularized landmark mean-shift (J. M. Saragih, Lucey, and Cohn 2011). The model requires a set of training images that have been manually annotated to show the different points of each face. One such set is the MUCT face database (Milborrow, Morkel, and Nicolls 2010).

FaceTracker is a mature open source project implementing this algorithm with the help of the image-processing library OpenCV. It includes wrappers for OpenFrameworks, Python and Cinder, as well as a standalone application, FaceOSC, for transmitting the data as Open Sound Control (OSC) messages for easy access in many programming environments. An example of the data FaceTracker can extract is seen in Figure 8. Another project, with partly the same authors and focusing on the use of the algorithm for avatar animation, is the CSIRO Face Analysis SDK (Cox et al. 2013). Clmtrackr is an implementation of the algorithm in JavaScript, utilizing WebRTC for capturing the user’s webcam and WebGL for image processing, and it is capable of running in an internet browser.

3.2.2 Body

Emotions are expressed in the body by postures and gestures. An energetic gesture, such as a fast movement of the hands, accompanies high-energy emotions, like joy and terror. A closed countenance with the shoulders together and forward is a sign of sadness or discomfort, while an open countenance shows happiness and comfort. Motion tracking in physical space can be approached with different methods, such as magnetic, mechanical, acoustic, inertial and optical tracking, and their combinations (Bowman et al. 2004). I will give overviews of optical tracking with Microsoft Kinect and inertial tracking with motion sensors, as these methods are readily available, economical and represent two different use cases: tracking in a room and mobile tracking.

Accurate tracking of the body used to be difficult and expensive, and required special markers or a special motion-tracking suit. The release of the Microsoft Kinect device in 2010, and the subsequent release of open source and official SDKs for it, made motion tracking much more affordable and accessible. The Kinect and other similar devices use a depth camera and machine learning to analyze the position and orientation of the limbs of one or several people at the same time. There are a few limitations with this kind of technology: it does not work well in sunlight because infrared light is used to create the depth map, and it requires the physical placement of the device in a suitable location in relation to the user, so that the camera can see the whole person without occlusion. This makes it especially unfit for mobile applications.

Attaching motion sensors to the body is another way of tracking movement. The three most common motion sensors are accelerometers, gyroscopes and magnetometers. An accelerometer can detect the direction and strength of an accelerating force applied to it. A gyroscope detects the rotations that it is exposed to. A magnetometer detects magnetic fields, and in typical movement tracking it is used to find the absolute orientation relative to the earth’s magnetic field. A combination of an accelerometer, a gyroscope and sometimes a magnetometer is known as an inertial measurement unit (IMU). An IMU provides data regarding its movement and orientation. Position data can be derived with varying accuracy depending on the complexity of the analysis algorithm and the quality of the sensors. Motion sensors are included, and accessible with official or unofficial SDKs, in several consumer devices, such as activity monitors (Fitbit etc.), game controllers (Nintendo Wii, Blobo), as well as most smart phones. Also, inexpensive integrated electronic components that can be used together with microcontrollers, such as the Arduino, are available under the name IMU or simply as accelerometers, gyroscopes and magnetometers, or digital compasses.
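To illustrate what can be derived from the accelerometer alone, the following sketch estimates static tilt from the direction of gravity and computes a crude activity measure from the magnitude of the acceleration. It assumes readings in units of g and an axis convention in which z points up when the device lies flat; real devices differ in their conventions, and both function names are hypothetical.

```python
import math

def tilt_from_accelerometer(ax, ay, az):
    """Estimate (pitch, roll) in degrees from a reading taken while at rest.

    Only valid when gravity dominates the signal; during fast movement the
    estimate is disturbed, which is why IMUs combine it with a gyroscope.
    """
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    roll = math.degrees(math.atan2(ay, az))
    return pitch, roll

def movement_energy(samples):
    """Mean absolute deviation of the acceleration magnitude from 1 g.

    `samples` is a sequence of (ax, ay, az) tuples in g. A value close to
    zero suggests stillness; larger values suggest energetic movement.
    """
    if not samples:
        return 0.0
    deviations = [abs(math.sqrt(ax * ax + ay * ay + az * az) - 1.0)
                  for ax, ay, az in samples]
    return sum(deviations) / len(deviations)
```

A measure like `movement_energy` is only a rough proxy for the energy of a gesture, but it hints at how the high-energy and low-energy ends of emotional expression in the body could be separated with very simple sensor data.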

3.2.3 Voice

We as listeners are good at inferring the emotion in a voice. Anecdotally, it is easy to recognize distress or excitement in another person’s voice, and to assess the sincerity of the emotion portrayed. Empirical studies have shown that the prediction accuracy is around 50% and significantly above chance level, when picking from a set of five basic emotions: fear, joy, sadness, anger and disgust. The studies seem to also suggest that there is a difference in how well different types of emotions are distinguished. The recognition is best for sadness and anger, followed by fear and joy, and disgust is recognized rather poorly. Unsurprisingly, when using a larger set of possible emotions for the classification, similar emotions are more often confused together, for example pride is confused most easily with elation, happiness and interest. (Pittam and Scherer 1993; Banse and Scherer 1996)

Early work attempting to classify the acoustic qualities of emotions looked into different vocal profiles and patterns in the fundamental frequency (F0) during articulation (Banse and Scherer 1996). Newer work explores a large number of acoustic features modeled with machine learning methods, such as Gaussian Mixture Models, Hidden Markov Models and Support Vector Machines. Even the newer approaches are not yet very good at evaluating natural data, as they perform only marginally above chance level with the most naturalistic data sets. Open source tools that can be used for this type of analysis include OpenEAR, developed at the Technical University of Munich, and its newer incarnation OpenSMILE. OpenSMILE supports incremental real-time processing, and is therefore suitable for interactive applications. (Schuller et al. 2009; Eyben et al. 2013)
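As an illustration of the kind of low-level feature involved, the sketch below estimates the fundamental frequency of a short audio frame with plain autocorrelation. This is a generic textbook technique chosen for brevity, not the method used in the cited studies or in OpenSMILE; the function name and the default search range of 75 to 400 Hz are assumptions.

```python
import math

def estimate_f0(samples, sample_rate, f_min=75.0, f_max=400.0):
    """Estimate the fundamental frequency (Hz) of one frame by autocorrelation.

    Returns None when no positive correlation peak is found, for example
    for silence or for frames shorter than the shortest candidate period.
    """
    min_lag = max(1, int(sample_rate / f_max))
    max_lag = min(int(sample_rate / f_min), len(samples) - 1)
    best_lag, best_score = None, 0.0
    for lag in range(min_lag, max_lag + 1):
        # Correlate the frame with a copy of itself shifted by `lag` samples.
        score = sum(samples[i] * samples[i + lag]
                    for i in range(len(samples) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    if best_lag is None:
        return None
    return sample_rate / best_lag

# Usage: a synthetic 200 Hz tone sampled at 8 kHz.
tone = [math.sin(2 * math.pi * 200 * i / 8000) for i in range(1024)]
f0 = estimate_f0(tone, 8000)
```

Tracking such an estimate frame by frame gives an F0 contour, whose mean, range and variability are examples of the descriptors that can then be fed to a classifier. Real speech is far less regular than a pure tone, so a practical system would add voicing detection and smoothing.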

3.3 Physiology

In this section I will consider the autonomous physiological features that can be used for input in an affective computing setting. These features are not immediately under conscious control, and for the most part not apparent on the outside. Physiological signals originate in either the central nervous system or the peripheral nervous system. The central nervous system consists of the brain and the spinal cord. Modern neuroscience considers the central nervous system to be the control center of the organism, and as such it contains a vast number of processes that represent and control basically all the activity of the body and mind. The peripheral nervous system consists of the autonomic nervous system, which is in charge of the autonomous control of bodily organs, and the somatic nervous system, which is in control of the sensory and motor nerves. The autonomic nervous system is further divided into the sympathetic and parasympathetic nervous systems. The sympathetic nervous system is responsible for stimulating the fight-or-flight response of the body, and the arousal features of emotions can be tracked from sympathetic activity. The parasympathetic nervous system is complementary to the sympathetic nervous system, and is responsible for the activity of the body in states of rest and contentment. More complex emotional dimensions can be traced to parasympathetic activity, as it stimulates processes such as sexual arousal, tear gland activation and salivation. (Tortora and Derrickson 2006)

3.3.1 The Brain

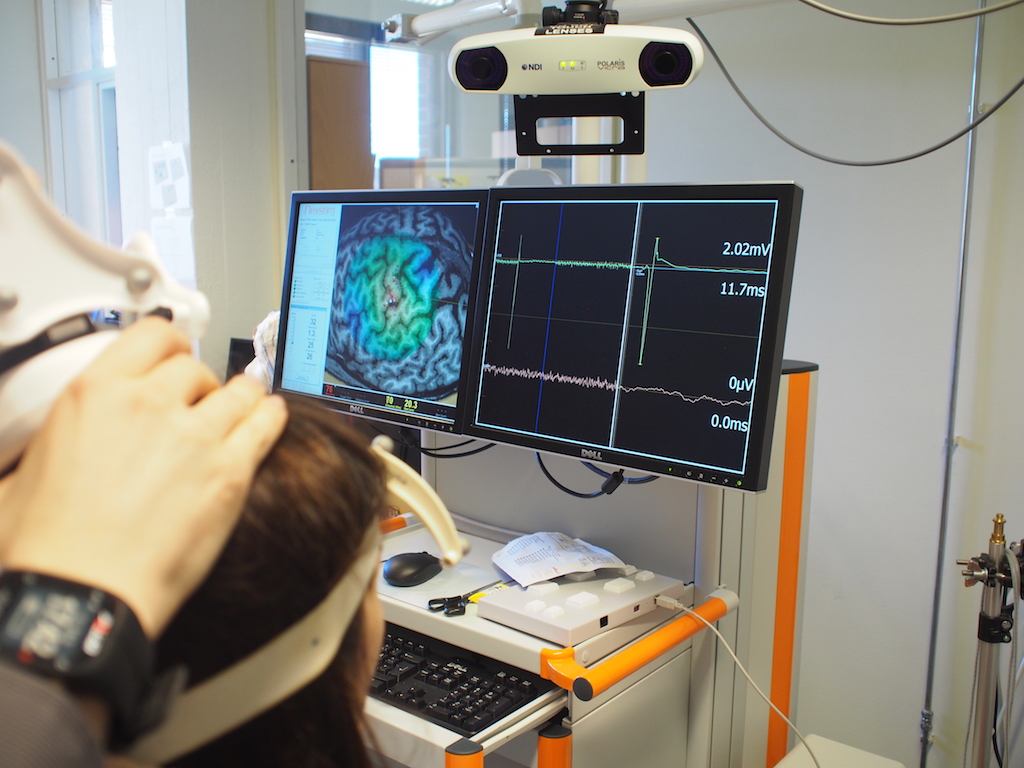

Neuroscience uses different tools for different purposes, ranging from functional magnetic resonance imaging (fMRI) to electroencephalography (EEG). FMRI is what is typically used in research presenting “brain scanning” images. It is a state-of-the-art technique that is used to take 3-dimensional images of changes in blood flow inside the brain. It can be used to research the function of different brain areas, and connections between them. FMRI requires a very strong magnetic field, and because of this it is not portable or safe to use outside of the laboratory. EEG is a traditional method for measuring brain activity from the electrical potential across the scalp. It has a long history: animal electricity was discovered in 1791 by Galvani, the electrical activity of the brain surface by Caton in 1875, and finally the first scalp recordings of human EEG were published in 1929 by Hans Berger. (Swartz and Goldensohn 1998)

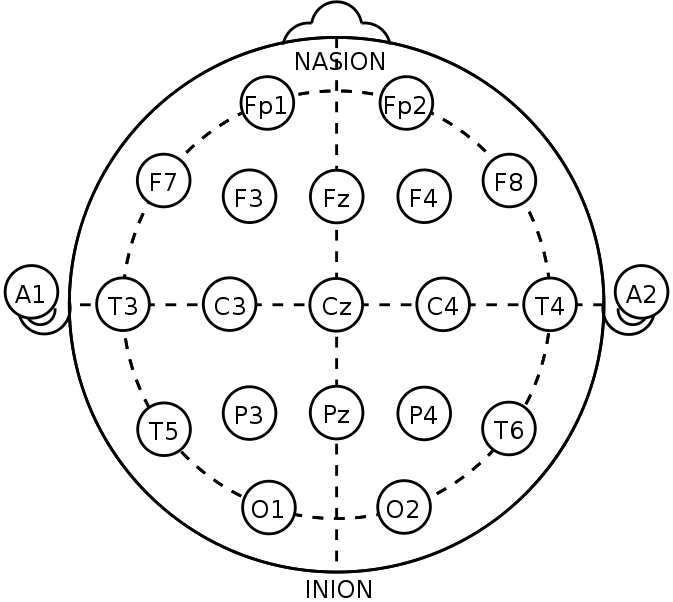

The electric signal measured by EEG stems from the activity of brain cells, or neurons. Neurons communicate via electrical impulses, known as action potentials, that travel across pathways, or synapses, between the neurons. A large population of neurons produces an effect that can be measured from the scalp with sensitive electrodes and amplifiers. EEG measurement produces a continuous signal from each electrode used, representing the potential difference, or the voltage, between the electrode and a reference point. Typical reference points are areas that are considered to have a neutral electric activity, such as the ear lobes, the nose, the mastoid bones or an average of many electrodes. EEG measurements can be done with one or several electrodes, sometimes up to 256 (Oostenveld and Praamstra 2001). An international standard for electrode locations, known as the 10-20 system (Figure 9), has been defined for consistency, and should be used as a reference when applying electrodes for a known application (Jasper 1958; Homan, Herman, and Purdy 1987).

Due to the distorting effect of the skull and the small number of electrodes used, what is actually being captured by the EEG signal is caused by many different parts of the brain at once, producing a lot of background activity in addition to the specific phenomenon that may be of interest to a researcher. This makes the EEG data inherently noisy, and a single effect, or the location within the brain that produces a signal, is not easy to extract from the data. Source localization of EEG signals is a field of study in and of itself (Koles 1998). On the other hand, the EEG measurement has a very good temporal accuracy, and phenomena can be detected and analyzed on a millisecond scale.

The EEG signal can be processed in two distinct ways. Single, accurate responses known as event-related potentials (ERP’s) are features of the signal that can be reliably produced with certain stimuli and patterns. Oscillations are observations in the frequency domain of the signal, and are typically studied as the relative power of different frequency bands.

Event-related potentials are used to study phenomena such as attention, memory and the processing order of sensory stimuli. Thanks to the high temporal accuracy of EEG, single atomic stimuli can be accurately tracked to certain features in the signal; these features are known as ERP’s. By varying parameters in the stimuli, different features have been identified that correspond to different sensory and mental phenomena. Due to the low signal-to-noise ratio of EEG, most ERP’s are studied by averaging multiple trials, which reduces the amount of noise that is not time-locked to the stimulus. Single-trial ERP measurements are also possible, and they have been utilized in, for example, brain-computer interfaces.
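The effect of trial averaging can be illustrated with a minimal sketch on simulated data. The ERP template, the noise level and all function names below are hypothetical and chosen only for illustration; the sketch assumes that every epoch is already cut to the same length and aligned to the stimulus onset.

```python
import random
from statistics import mean


def average_epochs(epochs):
    """Average stimulus-locked trials sample by sample."""
    n_samples = len(epochs[0])
    if any(len(epoch) != n_samples for epoch in epochs):
        raise ValueError("all epochs must have the same length")
    return [mean(epoch[i] for epoch in epochs) for i in range(n_samples)]


def simulate_epoch(template, noise_sd, rng):
    """One simulated trial: the template plus Gaussian background noise."""
    return [sample + rng.gauss(0.0, noise_sd) for sample in template]


def rms_error(signal, template):
    """Root-mean-square deviation of a signal from the noise-free template."""
    squared = [(a - b) ** 2 for a, b in zip(signal, template)]
    return (sum(squared) / len(squared)) ** 0.5


rng = random.Random(0)
# Hypothetical ERP: a single positive deflection (arbitrary microvolt scale)
template = [0.0] * 20 + [1.0, 3.0, 5.0, 3.0, 1.0] + [0.0] * 25
# Background noise is twice as large as the peak of the ERP itself
epochs = [simulate_epoch(template, 10.0, rng) for _ in range(400)]

single_error = rms_error(epochs[0], template)
averaged_error = rms_error(average_epochs(epochs), template)
print(f"single trial error: {single_error:.2f}, averaged: {averaged_error:.2f}")
```

With uncorrelated noise the error of the average shrinks roughly with the square root of the number of trials, which is why a deflection that is invisible in a single trial becomes visible in the average.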

Frequency analysis of EEG is based on calculating a Fourier transform of the EEG signal and analyzing the relative power of different frequency bands. The frequencies are typically divided into Delta, Theta, Alpha, Beta and Gamma bands, of which the Alpha band is the most commonly used as it is a reliable indicator of relaxation. The frequency bands can also be used to study the localization of effects by comparing the relative powers across different electrodes. A common technique used in emotion studies is measuring frontal and parietal hemispheric differences, especially as measured in the alpha band and from the electrodes P3 and P4 of the international 10-20 system (Crawford, Clarke, and Kitner-Triolo 1996). Some of this research suggests that relatively lower right hemispheric alpha activity is indicative of negative emotions, and lower left hemispheric alpha activity is indicative of positively valenced emotions, both in experiments where emotions are triggered by self-suggestion and by listening to music (Crawford, Clarke, and Kitner-Triolo 1996; L. A. Schmidt and Trainor 2001). Coan and Allen (2004) reviewed over 30 studies of EEG asymmetry, and identified compelling evidence for the role of EEG asymmetry as an emotion moderator, but noted that their review does not provide entirely conclusive results about the reliability of using EEG asymmetry for identifying emotional states.
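As a minimal sketch of this kind of analysis, the following Python code computes band powers and a hemispheric alpha asymmetry value for two synthetic channels. Several things here are assumptions made for illustration: the band limits (conventions vary between studies), the use of a naive discrete Fourier transform from the standard library instead of an optimized FFT, the asymmetry index ln(right) − ln(left), which is one commonly used form, and all function and variable names. The test signals are pure sine waves, not EEG recordings.

```python
import cmath
import math

# Band limits in Hz. These are assumed values; conventions vary between studies.
BANDS = {
    "delta": (1, 4),
    "theta": (4, 8),
    "alpha": (8, 13),
    "beta": (13, 30),
    "gamma": (30, 45),
}


def power_spectrum(signal, fs):
    """Naive DFT of a real signal; returns (frequencies in Hz, power per bin)."""
    n = len(signal)
    freqs, power = [], []
    for k in range(n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(signal))
        freqs.append(k * fs / n)
        power.append(abs(coeff) ** 2 / n ** 2)
    return freqs, power


def band_powers(signal, fs):
    """Absolute power summed over the bins of each band."""
    freqs, power = power_spectrum(signal, fs)
    return {name: sum(p for f, p in zip(freqs, power) if low <= f < high)
            for name, (low, high) in BANDS.items()}


def relative_band_powers(absolute):
    """Each band's share of the total power within the listed bands."""
    total = sum(absolute.values())
    return {name: p / total for name, p in absolute.items()}


def alpha_asymmetry(left_alpha, right_alpha):
    """ln(right) - ln(left); negative when the right side has less alpha power."""
    return math.log(right_alpha) - math.log(left_alpha)


fs = 128          # sampling rate in Hz (assumed)
n = 2 * fs        # two seconds of data


def tone(freq, amplitude):
    return [amplitude * math.sin(2 * math.pi * freq * i / fs) for i in range(n)]


# Synthetic stand-ins for P3 (left) and P4 (right): a 10 Hz alpha component
# that is stronger on the left, plus an equal 20 Hz beta component.
p3 = [a + b for a, b in zip(tone(10, 2.0), tone(20, 1.0))]
p4 = [a + b for a, b in zip(tone(10, 1.0), tone(20, 1.0))]

p3_power = band_powers(p3, fs)
p4_power = band_powers(p4, fs)
p3_relative = relative_band_powers(p3_power)
asymmetry = alpha_asymmetry(p3_power["alpha"], p4_power["alpha"])
print(p3_relative["alpha"], asymmetry)
```

For real recordings the signal would normally be windowed and the spectrum averaged over several segments before comparing electrodes; the sketch leaves these steps out to keep the relation between the Fourier transform, the band powers and the asymmetry value visible.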

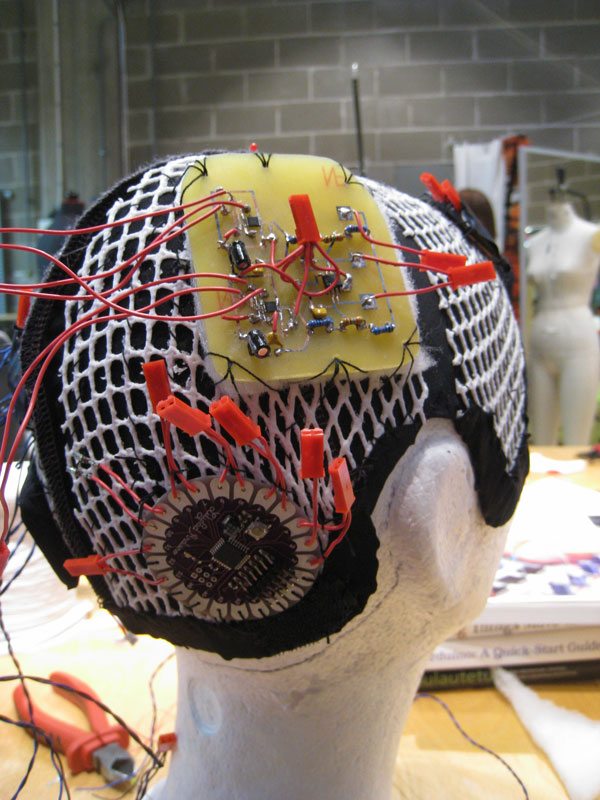

Laboratory equipment is not necessary for using EEG in interactive projects. A number of open source and commercial projects attempt to bring EEG to the hands of hobbyists and consumers. OpenBCI offers an open hardware licensed, 8-channel wireless EEG measurement device, sold assembled on their site, together with accompanying firmware. It can be used with OpenViBE, an analysis software created for realtime processing of the EEG signal in a visual data flow environment. OpenEEG is a long-running project for gathering information about building EEG devices and analyzing the data; they offer reference designs and a long list of software for EEG analysis, some of it a bit outdated. Companies selling commercial EEG devices aimed at consumers and developers include Emotiv and NeuroSky. The relative simplicity and affordability of EEG measurement devices make EEG a popular tool for interactive projects, and it has been used extensively for interactive art, musical instruments and brain-computer interfaces.

3.3.2 Skin conductance

Skin conductance (SC) is a method for measuring the activity of sweat glands on the surface of the skin. When you get nervous, you may actually feel your palms getting sweaty. This is caused by eccrine sweat gland activation. These types of sweat glands are densely located in areas of the face, palms, wrists and soles of the feet. The sensation of sweaty palms is an extreme case; even very small activations can be sensed by measuring the conductance between two points on the surface of, for example, the palm. As skin gets sweatier, it conducts electricity better, and as skin gets drier, the resistance increases.

Skin conductance is used to evaluate sympathetic nervous system activation. This means that it contains information about the arousal level of the individual and it is linked to the so-called fight-or-flight response, but it seems not to contain much information about emotional valence. Skin conductance, or skin resistance, is used frequently in polygraphs, although how diagnostic the signal is of truthfulness or deception remains contested. A recent study, in which skin conductance was measured for long periods outside of the laboratory, shows that SC activity can differ a lot between the left and the right side of the body when measured simultaneously (Picard, Fedor, and Ayzenberg 2015). The authors suggest a theory of multiple arousals, in which different parts of the brain affect the SC in different parts of the body. This new theory could be used to more accurately map the relationship between emotional experience and arousal across the body.

Two separate features in the skin conductance signal are usually analyzed. One is the skin conductance level (SCL), and the other is the skin conductance response (SCR). The SCL is a slowly changing average level of the skin conductance signal, sometimes measured only from the points which do not contain any SCR’s. The SCL and its current direction can be used as a measure of stress or arousal, with relaxation producing a decreasing and activation an increasing SCL. The SCR is a feature in the skin conductance signal, in the form of a quick increase followed by a slow decrease in conductance. The SCR has also been referred to as a startle response, because it can be reliably elicited by presenting a sudden unexpected stimulus, such as a loud sound, but it quickly diminishes if the stimulus is repeated. SCR’s also occur spontaneously, known in that case as non-specific SCR’s, and their occurrence rate can also be a measure of interest.

In professional settings, signal amplifiers and filters are used to increase the signal quality. Thankfully, the skin conductance signal is not very noisy itself, and in an experimental setup it can be measured with a simple resistive voltage divider. The resistance of the skin is typically in the range of \(0.5 - 1.5 M \Omega\), and the voltage divider circuit can be constructed by thinking of the skin between two electrodes as one resistor, \(R_1\), and using a \(1 M \Omega\) resistor as the other, \(R_2\). The skin resistance can be solved from the output voltage as shown in Equation \(\ref{voltagedivider}\); the skin conductance is its reciprocal, \(1 / R_1\).

\[ \begin{equation} \label{voltagedivider} \begin{aligned} V_{out} &= \frac{R_2}{R_1 + R_2} V_{in} \\ R_1 &= R_2 \left( \frac{V_{in}}{V_{out}} - 1 \right) \end{aligned} \end{equation} \]

The analysis of skin conductance can be approached in different ways, but a starting point is identifying the SCR’s, and their features such as amplitude, rise time and decay time. A startle response detection algorithm, as described by Healey (2000), attempts to find a significant rise in the skin conductance signal to signify the beginning of an SCR, and subsequently determines the maximum amplitude by finding the change in signal direction. My real-time compatible implementation of the algorithm for Python can be found at https://github.com/vatte/rt-bio/blob/master/physiology/SkinConductance.py. The SCR’s can then be utilized as either single events, or by determining their frequency over a period of time. Once the SCR’s are identified, the SCL can be calculated from the signal at points where no SCR is ongoing.
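The voltage divider conversion and the general idea of finding a rise followed by a change in signal direction can be sketched as follows. This is a simplified illustration and not the implementation linked above or the exact algorithm of Healey (2000): the slope threshold, the sampling rate, the supply voltage and the function names are all assumptions, and the code assumes that \(V_{out}\) is measured across the fixed resistor \(R_2\).

```python
def skin_conductance_microsiemens(v_out, v_in=5.0, r_fixed=1e6):
    """Convert the divider output voltage to skin conductance in microsiemens.

    Assumes the skin is R1 and v_out is measured across the fixed resistor R2,
    so that R1 = R2 * (V_in / V_out - 1).
    """
    if not 0.0 < v_out < v_in:
        raise ValueError("v_out must lie strictly between 0 and v_in")
    skin_resistance = r_fixed * (v_in / v_out - 1.0)
    return 1e6 / skin_resistance


def detect_scrs(conductance, fs, slope_threshold=0.05):
    """Find SCR's as a steep rise followed by a change in signal direction.

    slope_threshold is in microsiemens per second and is an assumed value
    that would need tuning for a real sensor.
    """
    scrs = []
    onset = None
    for i in range(1, len(conductance)):
        slope = (conductance[i] - conductance[i - 1]) * fs
        if onset is None:
            if slope > slope_threshold:
                onset = i - 1          # last sample before the rise
        elif slope <= 0:
            peak = i - 1               # signal stopped rising: maximum reached
            scrs.append({
                "onset": onset,
                "peak": peak,
                "amplitude": conductance[peak] - conductance[onset],
                "rise_time": (peak - onset) / fs,
            })
            onset = None
    return scrs


# With equal resistances the output is half of the input voltage: 1 MOhm = 1 uS
example_conductance = skin_conductance_microsiemens(2.5)

# Synthetic signal at 10 Hz: flat baseline, a quick rise and a slow decay
fs = 10
signal = [2.0] * 10 + [2.1, 2.2, 2.3, 2.4, 2.5] + [2.5 - 0.01 * i for i in range(1, 40)]
responses = detect_scrs(signal, fs)
print(example_conductance, responses)
```

A real signal would need smoothing before the slope is computed, since sample-to-sample noise would otherwise end a rise prematurely.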

3.3.3 Electric activity of the heart

The heart muscle produces a strong electrical signal when it pumps blood into the circulation. The recording of this signal is known as the electrocardiogram (ECG). By placing two electrodes on the left and right sides of the heart, the electric signal can be recorded. Typically electrodes are placed across the chest, such as in heart rate monitors used for jogging, but alternative placements are also possible, for example on both forearms. The ECG represents the cardiac cycle as a signal consisting of different features, labeled P, Q, R, S and T. The R peak is usually the most interesting of these features, as it is a large spike and can be used to determine the heart rate very accurately.
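As a minimal sketch of how the R peak can be turned into a heart rate, the code below finds local maxima above a threshold and converts the distances between them into intervals and beats per minute. The fixed threshold, the refractory period and the function names are assumptions for illustration, and the input is a synthetic spike train rather than a real ECG, which would need filtering and a more robust detector.

```python
def detect_r_peaks(ecg, fs, threshold, refractory_s=0.25):
    """Return sample indices of local maxima above a fixed threshold.

    A refractory period prevents one heartbeat from being counted twice.
    Both parameters are assumed values.
    """
    refractory = int(refractory_s * fs)
    peaks = []
    for i in range(1, len(ecg) - 1):
        is_local_max = ecg[i - 1] < ecg[i] >= ecg[i + 1]
        if is_local_max and ecg[i] > threshold:
            if not peaks or i - peaks[-1] > refractory:
                peaks.append(i)
    return peaks


def inter_beat_intervals(peaks, fs):
    """Time in seconds between successive R peaks."""
    return [(later - earlier) / fs for earlier, later in zip(peaks, peaks[1:])]


def heart_rate_bpm(ibis):
    """Mean heart rate in beats per minute from inter-beat intervals."""
    return 60.0 / (sum(ibis) / len(ibis))


# Synthetic "ECG": five seconds at 100 Hz with a spike every 0.8 seconds
fs = 100
ecg = [0.0] * (5 * fs)
for index in range(40, len(ecg), 80):
    ecg[index] = 1.0

peaks = detect_r_peaks(ecg, fs, threshold=0.5)
ibis = inter_beat_intervals(peaks, fs)
bpm = heart_rate_bpm(ibis)
print(peaks, ibis, bpm)
```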

The heart rate or inter-beat interval (IBI) itself is an indicator for certain states, and an elevated heart rate when feeling strong emotions is a common occurrence. Heart rate is also very susceptible to exercise and movement, and as such it can be difficult to separate the effects of mental processes from those caused by physical activity. Because of the high accuracy of measuring heart rate with ECG, another feature known as heart rate variability (HRV) can also be studied. HRV is the change in length between successive heart beats, \(IBI_{current} - IBI_{previous}\). The resulting HRV series can be analyzed in the frequency domain as well as with statistical measures, such as standard deviation. HRV is seen as an indicator of parasympathetic nervous system activity, and abnormal HRV’s have been related to stress and mortality.
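The statistical side of HRV analysis can be sketched with a few lines of Python. The successive differences follow the definition above; SDNN (the standard deviation of the intervals) and RMSSD (the root mean square of the successive differences) are two widely used summary measures, added here as examples. The interval values and function names are made up for illustration.

```python
import math
from statistics import stdev


def successive_differences(ibis):
    """IBI_current - IBI_previous for every pair of neighbouring beats."""
    return [current - previous for previous, current in zip(ibis, ibis[1:])]


def sdnn(ibis):
    """Sample standard deviation of the inter-beat intervals."""
    return stdev(ibis)


def rmssd(ibis):
    """Root mean square of the successive differences."""
    diffs = successive_differences(ibis)
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Hypothetical inter-beat intervals in milliseconds
ibis_ms = [800, 810, 790, 805, 795]

diffs = successive_differences(ibis_ms)
print(diffs, sdnn(ibis_ms), rmssd(ibis_ms))
```

Both measures are sensitive to missed or falsely detected beats, so in practice implausible intervals are usually removed before the statistics are calculated.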

3.3.4 Other physiological measurements

A simple way to measure heart rate is using photoplethysmography. By shining a light through an area of the body with a relatively large number of blood vessels and relatively soft tissue, such as a finger or the ear lobe, the pressure changes in the blood stream can be measured with a light sensor. In its simplest form this can be achieved with a light-dependent resistor (LDR) and a light-emitting diode (LED). By adding an infrared sensor and an infrared LED, the oxygen or red blood cell level in the blood can also be measured by comparing the visible light and infrared light measurements, due to red blood cells absorbing the infrared light more effectively.

Respiratory inductance plethysmography is the measurement of breathing from the varying circumference of the chest, or thorax, and the stomach. The varying circumference is caused by the filling and emptying of the lungs. This type of sensor can be made for example from a fabric stretch sensor. The signal can be analyzed by observing the changes in direction, or the changing of sign of the signal’s delta: breathing out begins when the circumference reaches its maximum, and breathing in begins when the circumference reaches its minimum value.
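The direction-change analysis described above can be sketched as follows. The function name and the sample values are hypothetical, and the sketch assumes an already smoothed signal, since noise in a raw stretch sensor reading would produce spurious sign changes.

```python
def breathing_phases(circumference):
    """Mark where the sign of the sample-to-sample delta changes.

    A change from rising to falling (a maximum) starts an exhale, and a
    change from falling to rising (a minimum) starts an inhale. Flat
    stretches with a zero delta are skipped.
    """
    events = []
    previous_sign = 0
    for i in range(1, len(circumference)):
        delta = circumference[i] - circumference[i - 1]
        sign = (delta > 0) - (delta < 0)
        if sign == 0:
            continue
        if previous_sign > 0 and sign < 0:
            events.append((i - 1, "exhale"))
        elif previous_sign < 0 and sign > 0:
            events.append((i - 1, "inhale"))
        previous_sign = sign
    return events


# Hypothetical chest circumference samples (arbitrary units)
samples = [0, 1, 2, 3, 2, 1, 0, 1, 2, 3, 2]
phases = breathing_phases(samples)
print(phases)
```

The time between two successive events of the same type gives the length of one breathing cycle, from which the respiration rate can be calculated.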

4 Transmission

Transmission generally means taking information, transforming it into a format best suited to the requirements of the transmission, such as speed, size and reliability, and conveying it from the sender to the receiver. Emotionally relevant data takes multiple forms, and there is no consensus on the best format for transferring emotions. To try to keep the options for the protocol as unlimited as possible, I will present different strategies for encoding input data and decoding it into a format suitable for output, while preserving the most relevant signals. Encoding and decoding are approached from two different points of view: machine learning models use existing data to construct statistical models of relationships, and rule-based models apply a theory, an idea or scientific knowledge as fixed rules governing system behavior.